In the previous post, author Josh Rickard shared fundamental LLM approaches. This follow-up dives into more specific examples, including more "advanced" patterns developed over approximately one year of practical application.

Terminology

Prompting

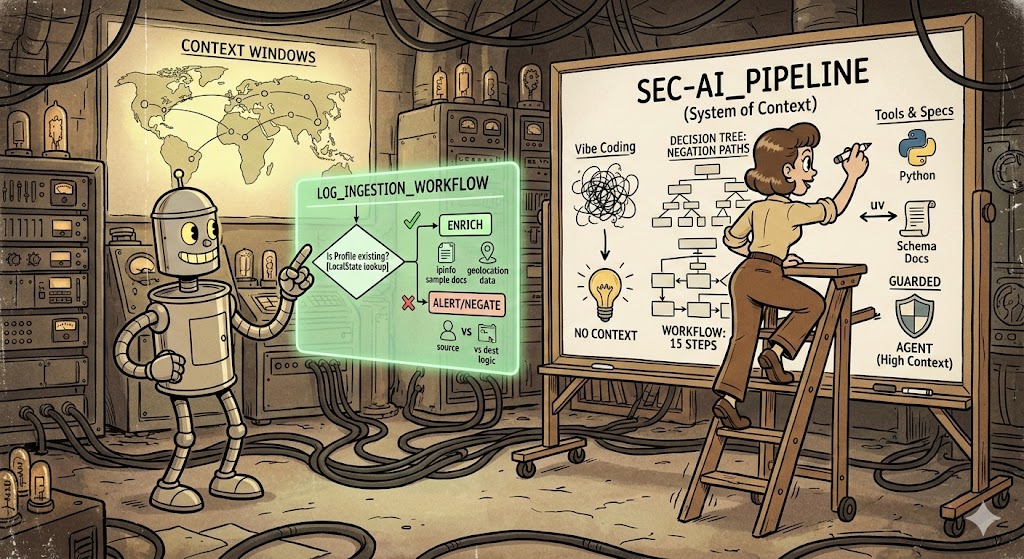

Prompting is direct interaction — providing statements with minimal context and general requirements, either as a question or problem description. The approach involves iterative cycles of question, answer, fix, validate, and test. The author distinguishes between casual "vibe coding" and the more rigorous requirement to deeply understand problems before articulating them to LLMs.

Agents

Agents involve very precise context given to an LLM, creating "guardrails for a request" through documentation, use cases, requirements specifications, and decision negations (explicit statements of what not to do). This context ranges from 1,000-word documents to prompts of 50,000+ characters with extensive contextual detail.

SKILLS.md

SKILLS.md files are Markdown files that capture organizational knowledge about performing specific actions. They feed into agents to provide context and support decision-making.

Workflows

Workflows are direction documents for how an LLM should "analyze", "examine", or "perform" tasks, with defined steps including tool usage and result interpretation — similar to incident response playbooks but in markdown format.

Assistants

Assistants are the "newest evolution" — combining multiple workflows, triggering SKILLS.md files, and using tools (MCP servers) with discrete agents to perform tasks, with defined decision-making mechanisms controlled through API hooks.

Context Is Key

The more concrete the context you provide to an LLM (expected outputs, decision points, paths to follow, descriptions of key terms, specifications, requirements, and more), the better the inference results.

The author demonstrates this with an example: a request to automate Google Chrome profiles improves significantly once the following context is added: "Ensure that the provided Google Chrome Profile name represents the display name shown within Google Chrome. For example, I have a profile named 'Thrunting - X organization name' and it should match the input value." The technical detail behind this is that profile display names reside in ./LocalState rather than in the parent directories.
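To make that technical detail concrete, here is a minimal Python sketch of resolving a display name to a profile directory. It assumes the file the post writes as ./LocalState is Chrome's JSON `Local State` file and that it holds a `profile.info_cache` object keyed by profile directory name, with a `name` field per entry. That layout is an assumption drawn from typical Chrome installs, not something the post spells out, and the helper names `load_local_state` and `find_profile_dir` are hypothetical.

```python
import json
from pathlib import Path
from typing import Any, Dict, Optional


def load_local_state(path: Path) -> Dict[str, Any]:
    """Read and parse the Local State JSON file at the given path."""
    return json.loads(path.read_text(encoding="utf-8"))


def find_profile_dir(local_state: Dict[str, Any], display_name: str) -> Optional[str]:
    """Return the profile directory whose display name matches, or None.

    Assumed layout (not confirmed by the post):
    {"profile": {"info_cache": {"<directory>": {"name": "<display name>"}}}}
    """
    info_cache = local_state.get("profile", {}).get("info_cache", {})
    for directory, info in info_cache.items():
        if info.get("name") == display_name:
            return directory
    return None
```

Without the added context, an LLM has no reason to know that the name a user sees may differ from the directory name on disk, which is exactly the gap the extra sentences in the prompt close.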

Back to the Basics

You must intimately know the problem before effectively utilizing LLMs for solutions. The author recommends several important categories of context to provide:

Personas / Identities / Personalities

Describe your preferred frameworks, technologies, and design patterns to shape LLM outputs toward familiar tools and approaches rather than unfamiliar alternatives.

Perspectives & Goals

Define viewpoints (SDET perspective, red team perspective) and specific objectives (threat discovery, novel threat identification).

Tools

List available resources including MCP servers, local infrastructure, and specific technical guidelines with examples of when to employ each tool.

Documentation

Provide API specifications, JSONSchemas, repositories, organizational terminology, similar solved problems, and critically, examples of what NOT to do with specific scenarios and their explanations.

Requirements

Specify technical and business requirements, framework/language guidelines, and identify potential complications.
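One way to keep these categories consistent from request to request is to assemble them programmatically. The following is a minimal sketch, assuming a plain-text prompt with one labeled section per category. The `PromptContext` class and its field names are hypothetical and simply mirror the headings above.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PromptContext:
    """Hypothetical container for the context categories described above."""
    persona: str = ""
    goals: List[str] = field(default_factory=list)
    tools: List[str] = field(default_factory=list)
    documentation: List[str] = field(default_factory=list)
    requirements: List[str] = field(default_factory=list)
    what_not_to_do: List[str] = field(default_factory=list)

    def render(self, task: str) -> str:
        """Render the task followed by every non-empty context section."""
        sections = [
            ("Persona", [self.persona]),
            ("Perspectives & Goals", self.goals),
            ("Tools", self.tools),
            ("Documentation", self.documentation),
            ("Requirements", self.requirements),
            ("What Not to Do", self.what_not_to_do),
        ]
        lines = [f"Task: {task}"]
        for title, items in sections:
            items = [item for item in items if item]  # drop empty entries
            if not items:
                continue  # omit sections with nothing to say
            lines.append("")
            lines.append(f"{title}:")
            lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)
```

Keeping "What Not to Do" as its own field makes it harder to forget, which matters because the negative examples are the part most often left out.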

Advanced Example: Threat Enrichment Pipeline

The author provides a hypothetical scenario (developed in approximately 30 minutes) for building a threat enrichment pipeline. This example demonstrates comprehensive context provision:

Description: Acting as both a senior security researcher and a senior software engineer, build a highly scalable threat enrichment pipeline that processes anywhere from 100 to 1 billion IPs daily, extracts IPv4/IPv6 addresses along with their contextual information, and performs geolocation enrichment with a required latency of 10 ms.
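It can help to translate the daily volume into a per-second rate before handing the description to an LLM, since that is the figure a design actually has to meet. The following is a back-of-the-envelope sketch, assuming traffic is spread evenly across the day (real traffic is usually burstier, so the average is a lower bound on the peak) and assuming each lookup takes the full 10 ms. The function names are illustrative.

```python
import math

SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def average_rate_per_second(events_per_day: int) -> float:
    """Average events per second if load were perfectly even."""
    return events_per_day / SECONDS_PER_DAY


def concurrent_lookups(events_per_day: int, latency_seconds: float) -> float:
    """Average lookups in flight at once (Little's law: rate x latency)."""
    return average_rate_per_second(events_per_day) * latency_seconds


print(round(average_rate_per_second(1_000_000_000)))         # 11574
print(math.ceil(concurrent_lookups(1_000_000_000, 0.010)))   # 116
```

Stating numbers like these in the prompt, rather than only "1 billion a day", gives the model a concrete target for batching, pooling, and caching decisions.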

Tools Specified

- Golang

- gRPC & protobuf for inter-service communication

- PostgreSQL with ip4r extension

- pgbouncer for scalable queries

- Redis (rueidis client library) for caching

- Kubernetes with lightweight images

- Prometheus for monitoring and metrics

Requirements

- Capability to process 1 billion events daily

- Complete IP extraction with contextual preservation (source vs. destination)

- Extraction format that can be easily modified for future support

- 18-hour minimum cache retention with invalidation capability

- Encrypted communications with secure authorization between services

- ip4r-typed indexes

- Straightforward geolocation data upgrade procedures
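Requirements phrased this concretely translate almost directly into testable behavior. As an illustration of the cache requirement only, here is a minimal in-memory sketch in Python. The post specifies Redis through the rueidis Go client, so this stand-in `TTLCache` class is hypothetical and is meant to show the expected behavior (18-hour retention plus explicit invalidation), not the author's implementation.

```python
import time
from typing import Any, Callable, Dict, Optional, Tuple

EIGHTEEN_HOURS = 18 * 60 * 60  # retention from the requirements, in seconds


class TTLCache:
    """Hypothetical in-memory cache with per-entry expiry and invalidation."""

    def __init__(
        self,
        ttl_seconds: float = EIGHTEEN_HOURS,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._entries: Dict[str, Tuple[float, Any]] = {}

    def set(self, key: str, value: Any) -> None:
        """Store a value that expires ttl_seconds from now."""
        self._entries[key] = (self._clock() + self._ttl, value)

    def get(self, key: str) -> Optional[Any]:
        """Return the cached value, or None if it is missing or expired."""
        entry = self._entries.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            del self._entries[key]  # lazily evict expired entries
            return None
        return value

    def invalidate(self, key: str) -> bool:
        """Remove an entry early; return True if something was removed."""
        return self._entries.pop(key, None) is not None
```

The explicit `invalidate` method corresponds to the "invalidation capability" item, for example when geolocation data is upgraded and cached results for an address should no longer be trusted.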

What Not to Do: Do not create separate IPv4 and IPv6 processing paths. The author emphasizes that specifying what NOT to do is just as important as specifying what to do.
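To illustrate what a single processing path can look like, here is a minimal Python sketch built on the standard library's `ipaddress` module, which parses both address families through one call. The event shape (`src_ip` and `dst_ip` keys) and the `extract_ips` helper are hypothetical. The post targets Go, so treat this as a sketch of the idea rather than the pipeline itself.

```python
import ipaddress
from dataclasses import dataclass
from typing import Dict, List, Union

IPAddress = Union[ipaddress.IPv4Address, ipaddress.IPv6Address]

# Hypothetical mapping from event field name to the role it represents.
FIELD_ROLES = {"src_ip": "source", "dst_ip": "destination"}


@dataclass(frozen=True)
class ExtractedIP:
    address: IPAddress
    role: str     # "source" or "destination", preserved from the event
    version: int  # 4 or 6, derived from the parsed address


def extract_ips(event: Dict[str, str]) -> List[ExtractedIP]:
    """Extract addresses from an event using one path for IPv4 and IPv6."""
    found: List[ExtractedIP] = []
    for field_name, role in FIELD_ROLES.items():
        raw = event.get(field_name)
        if raw is None:
            continue
        try:
            # ip_address() returns an IPv4Address or IPv6Address as appropriate.
            addr = ipaddress.ip_address(raw.strip())
        except ValueError:
            continue  # skip values that are not valid IP addresses
        found.append(ExtractedIP(addr, role, addr.version))
    return found
```

Because the address family is a property of the parsed value rather than a branch in the code, adding a new field or role means changing `FIELD_ROLES` only, which also speaks to the requirement that the extraction format be easy to modify.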

Conclusion

Individuals capable of describing problems thoroughly and efficiently will "prevail as we move into this new era of technology advancement." Effective LLM utilization requires the discipline of complete problem comprehension — comparable to project management guidance but applied to AI interaction.

You must intimately know the problem before effectively utilizing LLMs for solutions. Context is everything — the more concrete, the better the results.