AI models can now independently identify high-severity vulnerabilities in complex software. As Anthropic recently documented, Claude found more than 500 zero-day vulnerabilities in well-tested open-source software.

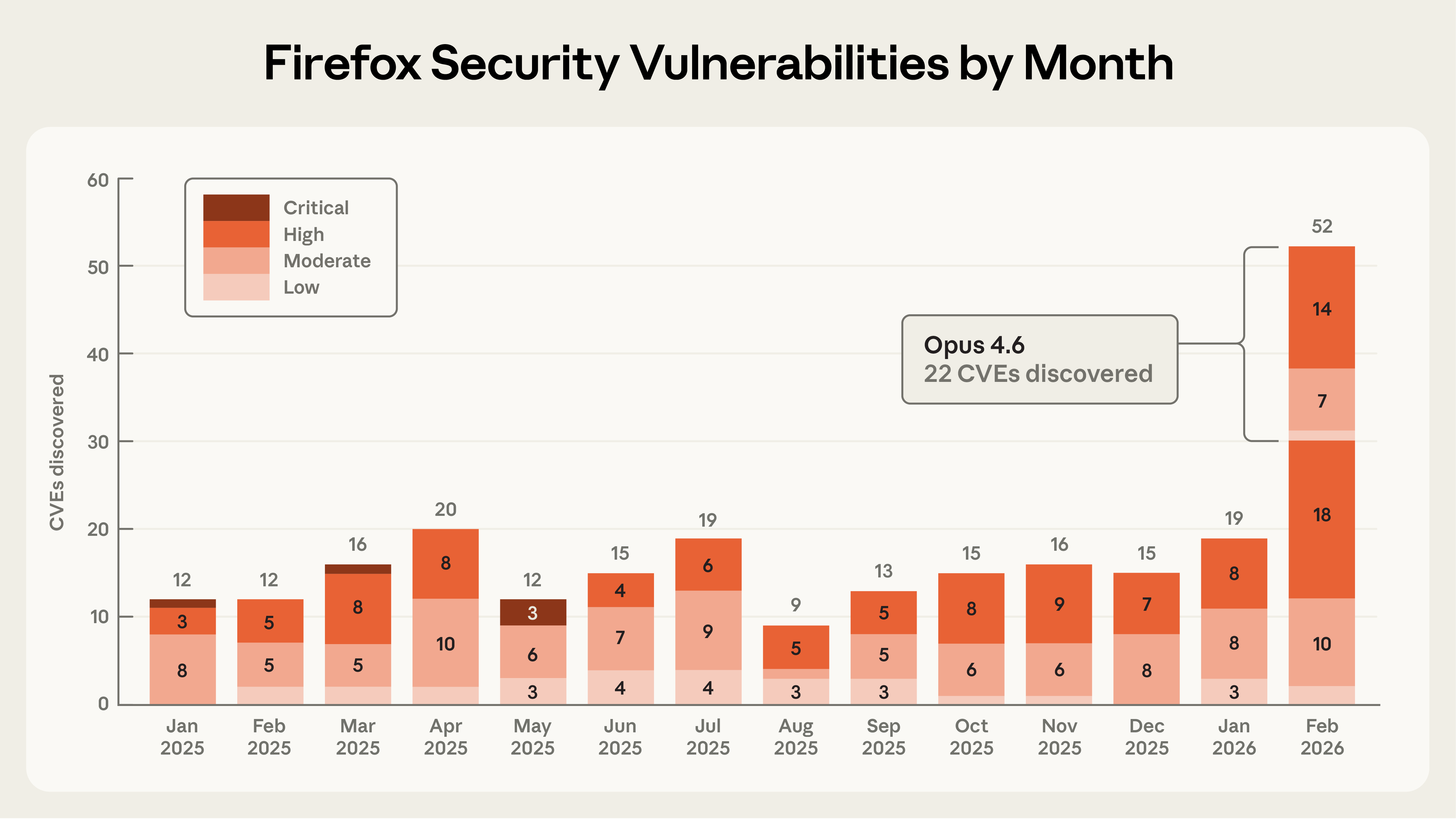

In this post, Anthropic shares details of a collaboration with researchers at Mozilla in which Claude Opus 4.6 discovered 22 vulnerabilities over the course of two weeks. Of these, Mozilla assigned 14 as high-severity — almost a fifth of all high-severity Firefox vulnerabilities that were remediated in 2025. As part of this collaboration, Mozilla shipped fixes to hundreds of millions of users in Firefox 148.0.

From model evaluations to a security partnership

In late 2025, Anthropic noticed that Opus 4.5 was close to solving all tasks in CyberGym, a benchmark that tests whether LLMs can reproduce known security vulnerabilities. They needed harder, more realistic evaluations.

Firefox was chosen because it's both a complex codebase and one of the most well-tested and secure open-source projects in the world. Browser vulnerabilities are particularly dangerous because users routinely encounter untrusted content.

The initial approach involved using Claude to locate previously-identified CVEs in older Firefox versions. Opus 4.6 was able to reproduce a substantial percentage of historical CVEs — bugs that originally required significant human effort to discover. However, concerns about training data contamination led the team to the real test: finding novel, unreported vulnerabilities in the current Firefox release.

A Use After Free in 20 minutes

The team focused initial efforts on Firefox's JavaScript engine — a critical component that processes untrusted external code during web browsing.

After just twenty minutes of exploration, Claude Opus 4.6 reported that it had identified a Use After Free — a type of memory vulnerability that could allow attackers to overwrite data with arbitrary malicious content — in the JavaScript engine. The researchers validated the finding independently against the latest Firefox release, then filed a Bugzilla report with a vulnerability description and Claude-generated patches.

In the time it took to validate and submit this first vulnerability, Claude had already discovered fifty more unique crashing inputs. By the end of this effort, the team had scanned nearly 6,000 C++ files and submitted a total of 112 unique reports. Most issues received fixes in Firefox 148, with remaining fixes scheduled for upcoming releases.

From identifying vulnerabilities to writing primitive exploits

To measure the upper limits of Claude's cybersecurity abilities, Anthropic developed a new evaluation to determine whether Claude was able to exploit any of the bugs it discovered — specifically, whether it could create functional exploits for malicious code execution.

Claude was given access to the submitted vulnerabilities and attempted to create targeted exploits. Success required demonstrating actual attacks: reading and writing local target system files.

The team ran this test several hundred times with different starting points, spending approximately $4,000 in API credits. Despite this, Opus 4.6 was only able to actually turn the vulnerability into an exploit in two cases. Two key conclusions:

- Claude is much better at finding these bugs than it is at exploiting them

- The cost of identifying vulnerabilities is an order of magnitude cheaper than creating an exploit for them

However, the fact that Claude can automatically develop crude browser exploits — even rarely — is noteworthy.

The exploits Claude wrote only worked on a testing environment which intentionally removed some of the security features found in modern browsers — most importantly the sandbox, the purpose of which is to reduce the impact of these types of vulnerabilities.

What's next for AI-enabled cybersecurity

The Firefox team highlighted three critical components for trustworthy AI-generated vulnerability reports:

- Minimal test cases — Reproducible proof that the bug exists

- Detailed proofs-of-concept — Clear explanation of how the vulnerability can be triggered

- Candidate patches — Suggested fixes that have been validated against the test suite

Anthropic also introduced the concept of "task verifiers" — a trusted method of confirming whether an AI agent's output actually achieves its goal. For vulnerability patching, this means verifying two properties: the vulnerability is actually removed, and program functionality is preserved.

The urgency of the moment

Frontier language models are now world-class vulnerability researchers. On top of the 22 CVEs identified in Firefox, Claude Opus 4.6 has been used to discover vulnerabilities in other important software projects like the Linux kernel.

Opus 4.6 is currently far better at identifying and fixing vulnerabilities than at exploiting them. This gives defenders the advantage. But looking at the rate of progress, it is unlikely that the gap between frontier models' vulnerability discovery and exploitation abilities will last very long.

Anthropic urges developers to take advantage of this window to redouble their efforts to make their software more secure.

_Source: _Anthropic Blog