Dear Readers,

Anthropic dropped Claude Opus 4.6 this week and my timeline exploded again. Same story as every model release. “Pentesters are done.” “Bug bounty is dead.” “Agentic AI changes everything.” Daniel Miessler is already calling it proof that all knowledge work is over and we need UBI by 2029. I swear I’ve read the same take 40 times by now, just with a different model name swapped in. So I figured I’d actually write down what I think, because most of what I’m reading out there is either delusional optimism or performative panic, and neither is helpful.

AI is a tool, not a replacement

I use Claude pretty much every day. It helps me write tools, review code, draft reports, and bounce ideas around. For the repetitive stuff it’s genuinely good, and nobody got into security because they enjoy writing up “missing X-Frame-Options header” for the 500th time. But the conversation has shifted from “AI is a useful tool” to “AI replaces the whole job” and that’s where people are getting it wrong. What these models are good at is speed on known patterns. Running through checklists, identifying textbook vulnerabilities, correlating scan output, producing text that reads like a professional report. That’s useful, but it’s not hacking.

The Jolokia research I did a few years back, chaining a JNDI injection through proxy mode into RCE and then dumping heap memory to extract Basic Auth credentials, that didn’t come from any checklist. I spent hours reading documentation that was never written with attackers in mind, poking at MBeans, trying things that didn’t work until something did. The bug lived in the gap between what the software was supposed to do and what it actually did. AI doesn’t explore gaps like that, it pattern-matches on training data, and when you give it something genuinely novel it either gives up or gives you a confident answer that’s completely wrong.

The Goldfish

If you want to understand why these things sound so smart and fail so hard, it helps to know what’s actually happening under the hood. An LLM doesn’t think, it just predicts the next word, and that’s literally all it does. Token by token, it picks whatever word is most likely to come next based on the patterns it absorbed during training. When Claude writes something that sounds like a senior pentester, it’s not drawing on experience, it’s just seen enough pentest reports to fake the tone. And the way these models get trained makes it worse. Humans rated outputs during training, thumbs up for stuff that sounded helpful and correct, thumbs down for stuff that didn’t. So the model learned to write things that sound right, not things that are right. That’s why hallucinations are so dangerous, because it never hedges or says “I’m not sure,” it just confidently produces whatever reads like a good answer, because that’s what got the thumbs up.
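To make the "predicts the next word" idea concrete, here is a deliberately tiny sketch. It is not how Claude or any real LLM is built: real models use neural networks over subword tokens and usually sample from a probability distribution rather than always taking the top choice. The corpus, `train_bigrams`, and `generate` are all made up for illustration. The point is only that "most likely next word" produces a fluent finding without ever looking at a target.

```python
from collections import Counter, defaultdict

# Toy "training data" (hypothetical, for illustration only).
CORPUS = (
    "the server is vulnerable to xss . "
    "the server is vulnerable to sqli . "
    "the server is vulnerable to xss . "
    "the login form is vulnerable to xss ."
)

def train_bigrams(text):
    """Count, for each token, which tokens followed it in the corpus."""
    counts = defaultdict(Counter)
    tokens = text.split()
    for current, following in zip(tokens, tokens[1:]):
        counts[current][following] += 1
    return counts

def generate(counts, start, max_tokens=8):
    """Greedy decoding: always append the most frequent follower."""
    out = [start]
    for _ in range(max_tokens):
        followers = counts.get(out[-1])
        if not followers:
            break  # nothing ever followed this token in the corpus
        out.append(followers.most_common(1)[0][0])
    return " ".join(out)

model = train_bigrams(CORPUS)
print(generate(model, "the", max_tokens=6))
```

The output reads like a finding because "xss" was the most common continuation in the corpus, not because anything was tested. Scale that up by many orders of magnitude and you get prose that sounds like a senior pentester.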

And then there’s memory, or really the lack of it. Every conversation starts from scratch. The model has no idea what you talked about yesterday or last week. Within a single conversation it gets a context window, basically a fixed amount of text it can hold in its head at once. Opus 4.6 gets about 200K tokens, which sounds generous until you’re deep into a pentest and realize it quietly forgot your earlier findings because they got pushed out the back. You have to keep re-feeding it context. On a real engagement where you’re building up a mental model over days, connecting a weird config file on day one to an auth bypass on day three, that’s not a minor inconvenience, it’s a wall. Your memory as a pentester is cumulative, but the model’s memory is a conveyor belt that dumps everything off the end.
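The conveyor belt can be sketched in a few lines. This is a simplified model under loud assumptions: `ContextWindow` is a hypothetical class, tokens are counted as whitespace-separated words, and the oldest messages are simply dropped once the budget is exceeded. Real clients differ (some summarize, some refuse to continue), so treat it as an illustration of the failure mode, not a description of any specific product.

```python
from collections import deque

def count_tokens(text):
    # Crude stand-in for a real tokenizer: one token per word (assumption).
    return len(text.split())

class ContextWindow:
    """Hypothetical fixed-budget context that evicts the oldest messages."""

    def __init__(self, max_tokens):
        self.max_tokens = max_tokens
        self.messages = deque()
        self.used = 0

    def add(self, message):
        """Append a message and return whatever fell off the back."""
        self.messages.append(message)
        self.used += count_tokens(message)
        dropped = []
        # Evict oldest-first until we fit, always keeping the newest message.
        while self.used > self.max_tokens and len(self.messages) > 1:
            oldest = self.messages.popleft()
            self.used -= count_tokens(oldest)
            dropped.append(oldest)
        return dropped

    def contains(self, phrase):
        return any(phrase in m for m in self.messages)

window = ContextWindow(max_tokens=12)
window.add("day one: odd debug flag in config file")
window.add("day two: nothing interesting")
dropped = window.add("day three: auth bypass on admin endpoint")

print(dropped)
print(window.contains("config file"))
```

By the time the day-three auth bypass shows up, the day-one config note is gone, so nothing is left to connect the two unless you feed it back in yourself.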

I keep coming back to the goldfish analogy because it captures how it feels to work with these models on real engagements. Incredible recall within the window, zero understanding beneath it. Ask Claude to spot a reflected XSS in a straightforward PHP app and it’ll get there. Ask it why a specific business logic flaw in a payment flow lets you buy things for negative prices and it’ll hallucinate something that looks like a real finding but falls apart the second you actually read it. There’s no intuition behind it, no “that’s weird, let me dig deeper,” no gut feeling from having seen something similar in a completely different context three years ago. It’s predicting what a security report should look like, not understanding the system it’s reporting on. And the bugs that actually matter almost always live in that messy space between systems that were never designed to interact the way they do. You find those by wondering about things, not by pattern-matching.

The hype is doing real damage

What really frustrates me is what this discourse is doing on the ground. Every week there’s another thread claiming someone used AI to find 50 bugs in 2 hours, and junior researchers take this at face value. They skip the fundamentals, point Claude at a target, and submit whatever comes out. AI-generated reports for vulnerabilities that don’t exist, duplicates of duplicates, “findings” that are just the OWASP Top 10 reworded with a target URL pasted in. The curl project killed its HackerOne bug bounty program in January specifically because of this. Their vulnerability confirmation rate dropped from north of 15% to below 5% as AI-generated slop flooded in. Programs are raising barriers to entry, and when a model hallucinates a vulnerability and someone submits it without verifying, it doesn’t just waste one triage cycle. It erodes trust across the entire ecosystem, because programs respond more slowly, get more skeptical, and disengage from researchers. The people getting hurt most are legitimate hunters who actually do the work, because now their reports land in a pile of AI-generated garbage and take twice as long to get triaged.

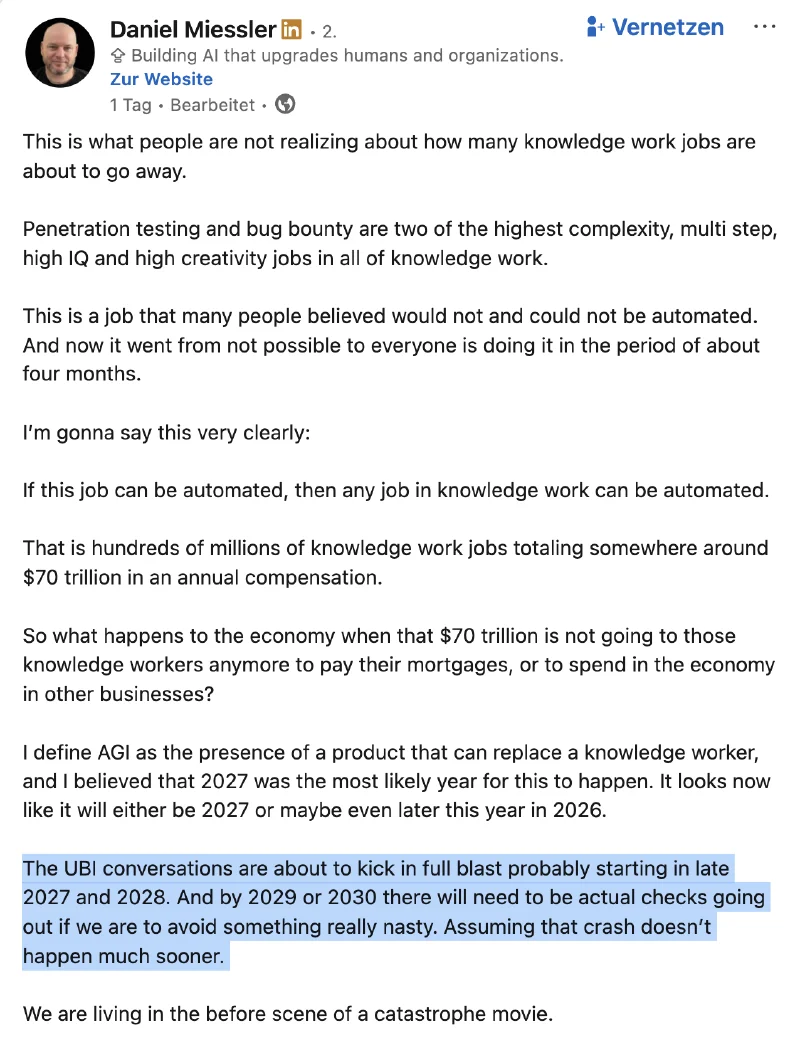

The $70 trillion leap

Daniel Miessler posted this week that pentesting “went from not possible to everyone is doing it in the period of about four months” and used that as proof that all knowledge work, $70 trillion in annual compensation, is about to be automated. AGI by 2027, UBI checks by 2029, “we are living in the before scene of a catastrophe movie.” It’s a hell of a LinkedIn post, but it’s built on a premise that doesn’t hold up.

Pentesting didn’t go from “not possible” to “everyone is doing it.” What happened is that people pointed AI at bug bounty targets and submitted whatever came out. We just talked about how that’s going: curl killed its program over it once more than 95% of submissions turned out to be junk. That’s not automation, that’s a spam cannon with a confidence problem. And the logic doesn’t chain the way he wants it to. “Pentesting is one of the hardest knowledge work jobs, AI can now do it, therefore all knowledge work is done.” But AI can’t actually do it. It can produce output that looks like a pentest report, which is a very different thing. By that standard AI “automated” novel writing years ago, and nobody is confusing that with replacing novelists. You don’t get to skip from “AI can run a scanner” to “$70 trillion in displaced wages” in three sentences with nothing in between except vibes. That’s not analysis, that’s content.

Follow the money

US ad spend alone hit $422 billion in 2025. The entire global penetration testing market is worth about $2.7 billion. That’s a gap of more than 150x, and it compares one country’s ad budget to the whole world’s pentest budget. Companies routinely spend more on a single ad campaign than on their entire annual pentest program. The industry everyone claims AI will “disrupt” was never well-funded to begin with, and when AI does make pentesting cheaper, companies aren’t going to reinvest that money into more testing. They’ll just spend less, because that’s what cost centers do.

Why pentesting though?

This is the part I genuinely don’t get. Of all the industries to obsess over automating, why this tiny one? Marketing, legal, accounting, content production, customer support, all of those are orders of magnitude bigger and full of exactly the kind of repeatable, well-documented work that AI is demonstrably good at. A marketing agency churning out ad copy is doing textbook AI-replaceable work. So is a paralegal reviewing contracts. So is a support team answering the same 50 questions every day. Dukaan replaced their entire 27-person support team with a chatbot and cut support costs by 85%. IBM’s internal HR bot handles 11.5 million interactions a year with a 94% containment rate, meaning only about 6% ever reach a human. That’s where the real displacement is happening, and nobody’s writing dramatic posts about it, because “AI replaces customer support agent” doesn’t get the same engagement as “AI replaces hacker.”

Anthropic did $9 billion in revenue last year and is targeting $26 billion for 2026, with 80% coming from enterprise API customers. They launched Cowork with plugins for sales, legal, and finance, and security isn’t even in the top three priorities. The “AI is coming for pentesters” narrative exists because it gets clicks, not because it reflects any company’s actual roadmap.

What I actually tell people

Use AI. It’s a good tool and it’ll get better. Get faster at the boring parts so you have more time for the interesting parts. But don’t let the hype convince you that understanding systems doesn’t matter anymore, and don’t skip the fundamentals because some guy on Twitter said AI will handle it. The ability to look at something and think “that feels off” isn’t going away with Opus 4.6 or 5.0 or whatever ships next. That’s still the job, and probably always will be.

Until next time